Many teams used to review code only rarely, but as the positive impact of code reviews became known, more and more groups adopted the practice and now benefit from it every day. Here we explain why code reviews are so useful and share our company's experience with them.

We will talk about our experience with a tool called Reviewdog. In later articles, we will discuss code analysis in more detail and look at the main analyzers.

What is Static Code Analysis?

In static code analysis, a program is analyzed without actually being executed; in dynamic analysis, everything happens during execution. In most cases, static analysis means analysis of source or executable code performed with automated tools.

Historically, the first static analysis tools, which often had the word lint in their names, were used to find the simplest defects in a program. They relied on a simple signature search; that is, they looked for matches with known signatures in a database. Such tools are still in use and help identify "suspicious" constructs in the code that may cause the program to crash when executed.
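The signature search described above can be sketched in a few lines of Ruby. This is only an illustration of the idea, not the code of any real linter: the `SIGNATURES` table, its two entries, and the `scan` helper are assumptions made up for this example.

```ruby
# Hypothetical signature database: description => pattern.
# The entries are illustrative and not taken from any real linter.
SIGNATURES = {
  "leftover debugger call" => /\bbinding\.pry\b/,
  "use of eval"            => /\beval\s*\(/
}.freeze

SignatureMatch = Struct.new(:line_number, :signature, :text)

# Scans source text line by line, without executing it, and returns
# every line that matches one of the known signatures.
def scan(source)
  source.each_line.with_index(1).flat_map do |line, number|
    SIGNATURES.select { |_name, pattern| line.match?(pattern) }
              .map { |name, _pattern| SignatureMatch.new(number, name, line.strip) }
  end
end

sample = <<~'SRC'
  total = price * count
  binding.pry
  result = eval(params[:formula])
SRC

scan(sample).each do |match|
  puts "line #{match.line_number}: #{match.signature}: #{match.text}"
end
```

The scanner only reads the text, so it can flag the suspicious lines even if the program itself cannot be run.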

Code review is one of the most useful defect detection methods. It consists of carefully reading the source code together and making recommendations for improving it. Reading the code reveals errors as well as sections that may become erroneous in the future. Also, during the review the code's author should not explain how a given part of the program works. The algorithm should be understandable directly from the program text and comments. If this condition is not met, the code should be reworked.

On the one hand, we want to review code regularly. On the other hand, it is too expensive. Static code analysis tools are a compromise. They process the source code and recommend that the developer pay closer attention to specific sections. Of course, a program does not replace a full code review carried out by a team of developers. However, the benefit-to-cost ratio makes static analysis a worthwhile practice for many companies. We should also understand that every automated review depends on the team's rules. Each team has its own coding standards, and the checks need to match what the development team has chosen.

Here are some tasks that static code analysis programs solve. They fall into three categories:

Identification of potential errors in programs.

Code style guidelines. Some static analyzers let you check whether the source code complies with the coding standard adopted by the company. This includes checking indentation in various constructs, the use of spaces or tabs, and so on.

Counting metrics. A software metric gives you a numerical value for a specific property of the software or its specifications. There is a large variety of metrics that can be calculated with such tools.

There are other ways to use static code analysis tools. For example, static analysis helps to monitor and train new employees who are not yet sufficiently familiar with the company's coding rules.

Static analysis is increasingly used to verify the properties of software in high-reliability computer systems, especially safety-critical ones. It is also applied to search for code that potentially contains vulnerabilities, which is sometimes called Static Application Security Testing, or SAST. Static analysis is used routinely for critical software in the following areas:
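As a small illustration of the metrics category, here is a sketch that counts a few basic size metrics for Ruby source text. The `count_metrics` helper is a hypothetical name for this example, and it deliberately simplifies: only full-line `#` comments are recognized.

```ruby
# Counts basic size metrics for Ruby source text.
# Simplification: only full-line "#" comments count as comments.
def count_metrics(source)
  metrics = { total: 0, blank: 0, comment: 0, code: 0 }
  source.each_line do |line|
    stripped = line.strip
    metrics[:total] += 1
    if stripped.empty?
      metrics[:blank] += 1
    elsif stripped.start_with?("#")
      metrics[:comment] += 1
    else
      metrics[:code] += 1
    end
  end
  metrics
end

sample = <<~'SRC'
  # Returns the full name
  def full_name
    "#{first_name} #{last_name}"
  end

SRC

p count_metrics(sample)
```

Real tools calculate far richer metrics, such as cyclomatic complexity, but the principle is the same: the numbers are derived from the source text alone.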

Medical device software

Software for Reactor Protection Systems

Aviation software in combination with dynamic analysis

Road and rail transport software

For our team, however, all the software we develop is critical, which is why we use analyzers on every project we take on.

Strengths and Weaknesses of Static Code Analysis

Static analysis has its strengths and weaknesses. It is essential to understand that there is no ideal method for testing programs. For different classes of software, different techniques will give different results. A high-quality program can only be achieved with a combination of techniques.

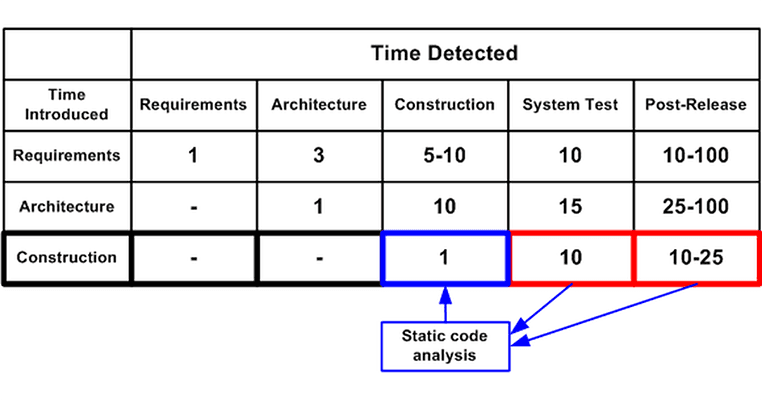

The main advantage of static analysis is that it can significantly reduce the cost of eliminating defects in a program. The earlier an error is identified, the lower the cost of fixing it. According to the data in Steve McConnell's book "Code Complete", fixing a mistake at the testing stage costs ten times more than at the development stage.

The cost of fixing defects depending on the time of their introduction and detection. Source: "Code Complete" by Steve McConnell, 2004

Strengths of static code analysis:

Static analyzers cover the code completely and check even those fragments that are executed extremely rarely. Such fragments, as a rule, cannot be tested by other methods. This lets you find defects in handlers for rare events, in error handlers, or in the logging system.

Static analysis does not depend on the compiler used or on the environment in which the compiled program will run. This lets you find hidden errors that may show up only years later, for example, errors caused by undefined behavior. Such errors can manifest when the compiler version changes or when different optimization flags are used.

Typos and the consequences of copy-paste can be detected easily and quickly. As a rule, finding these errors in other ways is a waste of time and effort. It is a shame to discover after an hour of debugging that the fault lies in an expression of the form "strcmp (A, A)". Such blunders are usually not remembered when common mistakes are discussed, but in practice, significant time is spent on identifying them.
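A copy-paste slip like "strcmp (A, A)" is easy to catch mechanically. The sketch below is a toy check written for this article, not the rule of any particular analyzer; the `IDENTICAL_ARGS` pattern and the `identical_argument_calls` helper are hypothetical names, and the pattern only handles simple two-argument calls. It needs Ruby 2.7 or newer because of `filter_map`.

```ruby
# Matches a call whose two arguments are textually identical,
# such as strcmp(A, A). The backreference \k<arg> requires the
# second argument to repeat the first one exactly.
IDENTICAL_ARGS = /\b(?<func>\w+)\s*\(\s*(?<arg>[\w.]+)\s*,\s*\k<arg>\s*\)/

# Returns [line_number, function_name, argument] for every line
# that contains such a call.
def identical_argument_calls(source)
  source.each_line.with_index(1).filter_map do |line, number|
    match = IDENTICAL_ARGS.match(line)
    [number, match[:func], match[:arg]] if match
  end
end

c_source = [
  "if (strcmp(name, name) == 0) {",
  "if (strcmp(name, other) == 0) {"
].join("\n")

p identical_argument_calls(c_source)
```

Only the first line is reported, because only there are both arguments the same.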

Weaknesses of Static Code Analysis:

Static analysis is generally weak at diagnosing memory leaks and concurrency errors. To find such errors, part of the program has to be executed virtually. Such algorithms also require a lot of memory and processor time. As a rule, static analyzers are limited to diagnosing simple cases. A more effective way to detect memory leaks and concurrency errors is to use dynamic analysis tools.

Also, such programs warn about suspicious places, which means the flagged code may in fact be entirely correct. Such warnings are called false positives. Only the developer can tell whether the analyzer has found a real error or issued a false positive. Reviewing false positives takes up working time and draws attention away from the parts of the code where errors really exist.

No matter how well the code works, you need to understand that programs always contain errors. Do not assume that rules, recommendations, and tools exist only for beginners and that all professionals write only clean and beautiful code. That is not so. There are general rules that should not be neglected. Only by combining different practices in your work with code can you be confident in its quality:

create coding standards that all developers on the team adhere to

review code for at least the most important sections and sections written by new employees

perform static and dynamic code analysis

use approaches such as TDD, BDD, DDD, etc.

We do not urge you to start using all of the above immediately. In different projects, some things will be more useful and others less. The main thing is to use a reasonable combination of techniques. Only this will improve the quality and reliability of the code.

How does the use of analyzers affect the business as a whole?

A Cisco code review study shows that, to be effective, developers should review no more than 200–400 lines of code at a time. Beyond that, the ability to detect defects decreases. With a review of that size spread over no more than 60–90 minutes, you should get a 70–90% yield. That is, if there were ten defects, you would find 7 to 9 of them.

This rule is illustrated by a graph that shows defect density as a function of the number of lines of code under review. Defect density is the number of errors found per 1000 lines of code. It drops once the number of lines under review goes beyond 200.

A reviewer who finds more errors is more effective. But if we give the reviewer more code to check, their effectiveness decreases. Most likely, with a large volume of code there is less time for each part of the review, so the efficiency is lower.

One of our own examples is AdOptimizer, a two-year project with more than 12k lines of Ruby code. Reviewing the code manually once takes around 24 extra hours, which is 3-4 days of a developer's work and costs around 1000 EUR. Complex projects usually need code review every day. A daily review of changes requires around 1 hour of the team leader's time, which is 5 hours per week, or about 24 hours per month. So for a six-month project, it will cost you around 6000 EUR. With automatic code review, you can spend that 6K EUR on new features instead of manual tasks.
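The defect density definition translates directly into a one-line formula. In this sketch, the `defect_density` helper is a hypothetical name and the input numbers are made up purely for illustration; they are not data from the study.

```ruby
# Defect density: number of defects found per 1000 lines of code.
def defect_density(defects_found, lines_of_code)
  raise ArgumentError, "lines_of_code must be positive" unless lines_of_code.positive?

  defects_found * 1000.0 / lines_of_code
end

# Illustrative numbers only: 6 defects found in a 200-line review.
puts defect_density(6, 200) # prints 30.0
```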
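The estimate can be reproduced with a short calculation. The figures of 24 hours per month and roughly 1000 EUR per 24 hours come from the paragraph above; the hourly rate is derived from them and is therefore an assumption, as is the `review_cost_eur` helper name.

```ruby
# Estimated cost of manual daily reviews over a project.
# hours_per_month and the cost of 24 hours (about 1000 EUR) come from
# the text; the hourly rate derived from them is an assumption.
def review_cost_eur(months, hours_per_month: 24, hourly_rate_eur: 1000.0 / 24)
  (months * hours_per_month * hourly_rate_eur).round
end

puts review_cost_eur(6) # prints 6000
```

Changing `hours_per_month` or `hourly_rate_eur` lets you plug in your own team's numbers.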

What tools do we use for code analysis?

In our company, we use a great tool named Reviewdog for automatic code review and analysis. It integrates with any linter and automatically publishes reports to the GitHub repository. It takes the output of lint tools and, if a finding falls within the diff of the patch under review, posts it as a comment. Reviewdog can also run in a local environment to filter the output of lint tools by diff.
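To make the idea of filtering lint output by diff more concrete, here is a minimal sketch. It is not Reviewdog's implementation: the `changed_lines` and `filter_by_diff` helpers and the `LintFinding` struct are hypothetical names for this example, and the parser handles only plain unified diffs.

```ruby
require "set"

LintFinding = Struct.new(:path, :line, :message)

# Parses a unified diff and returns { path => Set of line numbers
# that were added in the new version of the file }.
def changed_lines(diff_text)
  changed = Hash.new { |hash, key| hash[key] = Set.new }
  path = nil
  new_line = nil
  diff_text.each_line do |line|
    if line.start_with?("+++ ")
      path = line.chomp.sub(%r{\A\+\+\+ (b/)?}, "")
      new_line = nil
    elsif (header = line.match(/\A@@ -\d+(?:,\d+)? \+(\d+)(?:,\d+)? @@/))
      new_line = header[1].to_i
    elsif new_line.nil?
      next # still in the file header, before the first hunk
    elsif line.start_with?("+")
      changed[path] << new_line
      new_line += 1
    elsif line.start_with?(" ")
      new_line += 1
    end
    # Lines starting with "-" exist only in the old file,
    # so the new-file line counter does not move.
  end
  changed
end

# Keeps only the findings that point at lines touched by the diff.
def filter_by_diff(findings, diff_text)
  changed = changed_lines(diff_text)
  findings.select { |f| changed.key?(f.path) && changed[f.path].include?(f.line) }
end

diff = [
  "--- a/app/models/user.rb",
  "+++ b/app/models/user.rb",
  "@@ -10,3 +10,4 @@",
  " def name",
  "+  users.select(&:active?).first",
  "   full_name",
  " end"
].join("\n")

findings = [
  LintFinding.new("app/models/user.rb", 11, "prefer detect over select.first"),
  LintFinding.new("app/models/user.rb", 40, "line is too long")
]

filter_by_diff(findings, diff).each { |f| puts "#{f.path}:#{f.line}: #{f.message}" }
```

Only the finding on line 11 survives, because line 40 was not touched by the patch. This is what keeps review comments focused on the change under review rather than on the whole file.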

Well, let's take a look at real-life examples of using Reviewdog.

This is an example of how we use it in our pull requests. You can copy and paste the configuration shown here into .github/workflows/reviewdog.yml and use it with your Ruby on Rails project!

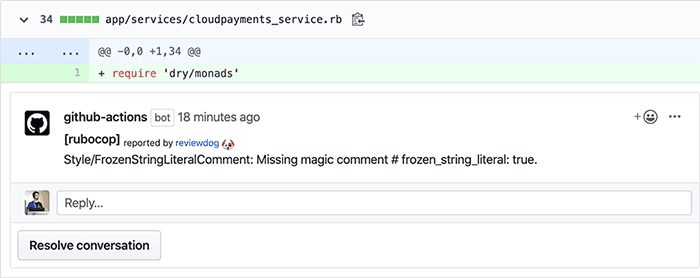

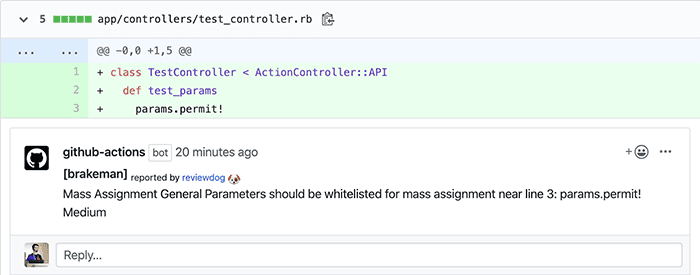

This example will produce comments similar to the following ones.

This comment comes from rubocop.

This report message came from brakeman.

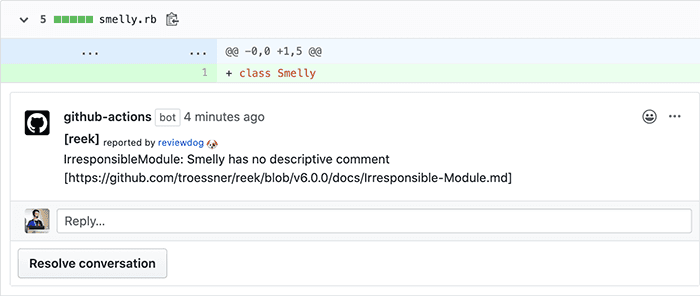

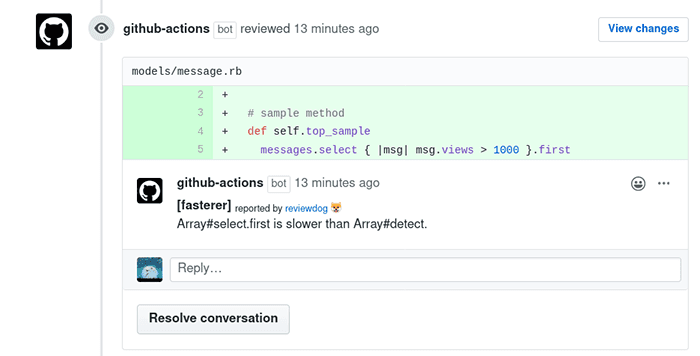

This action runs reek to reduce code complexity.

From this comment, we automatically know that developers need to use detect instead of select.first for better performance.
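The reason behind this recommendation is easy to demonstrate. `select` tests every element and builds a full array before `first` picks one item, while `detect` stops at the first match. The two helper methods below are hypothetical and exist only to count how many times the block is called.

```ruby
# Finds the first matching element with select.first and reports
# how many times the condition was evaluated.
def first_match_with_select(items)
  calls = 0
  result = items.select { |item| calls += 1; yield(item) }.first
  [result, calls]
end

# Same search with detect, which stops at the first match.
def first_match_with_detect(items)
  calls = 0
  result = items.detect { |item| calls += 1; yield(item) }
  [result, calls]
end

numbers = (1..1_000).to_a

p first_match_with_select(numbers) { |n| n > 10 } # prints [11, 1000]
p first_match_with_detect(numbers) { |n| n > 10 } # prints [11, 11]
```

Both approaches return the same element, but `detect` evaluates the condition only until it finds a match.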

Other Reviewdog Actions

We have shown you some examples, but you can install and use Reviewdog in GitHub Actions for your own projects. Indeed, Reviewdog is already used by many projects, covering JavaScript, Android, Rust, Common Lisp, and other languages and tools.

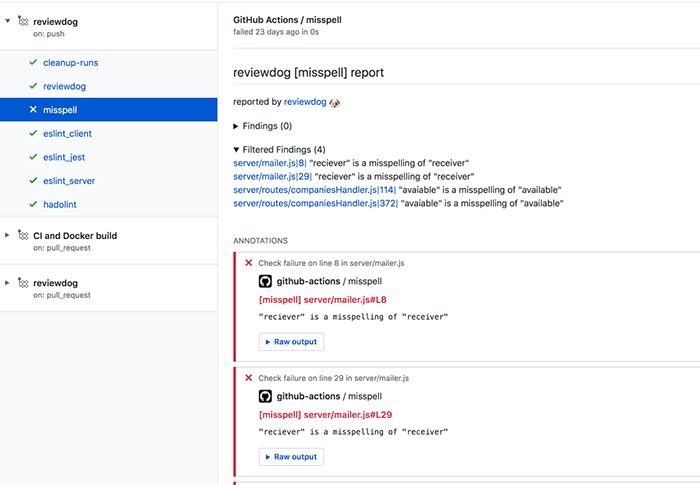

Take a look at the GitHub docs and reviewdog/action-misspell for examples of creating custom Reviewdog actions.

As you can see, Reviewdog is a great tool that helps a lot in improving code quality and performance.

Summary

Introducing static code analysis tools on your project can significantly improve the development process by raising the overall quality of the code. It can also speed up several stages of development, especially code review, and help your colleagues grow as developers, as they will see and apply the best practices built into the analyzer.

In this article, we looked at how the Reviewdog tool works. In upcoming blog posts, we will show examples of how other analyzers work and cover the topic of code review in more detail.
